Weekend project: making music with a laser cutter

I realized I could use my Grbl laser cutter to produce sounds. Its two stepper motors are driven by G-Code, and when they spin at the right speeds, they produce musical notes.

The first step was to find the frequency constant for my particular machine. I did this by moving an axis at a set speed and using a spectrum analyzer (in this case the Spectroid app for Android) to find the frequency it produces.

I obtained a constant of \(k = 1.337 \frac{\text{Hz}}{\text{mm/min}}\). Multiplying this value by a power of two shifts the octave range of the instrument.

A frequency \(f\) can be converted to a feed rate \(v\), and vice versa, using this formula:

\[f = kv\]

Go was my programming language of choice. It’s good to work with a type checker and compiler backing you up, and I was sure there were libraries available for parsing MIDI files.

Since a laser cutter has two axes, the program assigns one MIDI track to each stepper motor. I designed the program so that only one note can play at a time on each axis. There’s room for improvement here, for example by rapidly arpeggiating chords on each axis.

The program then joins the two tracks into an array of steps, each containing three values: the frequencies for the x and y axes, and the duration. If one or both tracks are silent, the corresponding frequency is zero (no movement).
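A minimal sketch of that merge, assuming each track has already been reduced to a sorted list of non-overlapping notes with start and end times in seconds. The `Note` and `Step` types and the `merge` function are hypothetical names, not taken from the author's source. The idea is to cut the timeline at every note boundary of either track, so each interval has one constant frequency per axis.

```go
package main

import (
	"fmt"
	"sort"
)

// Note is one note on a monophonic track, with times in seconds and pitch in Hz.
type Note struct {
	Start, End, Freq float64
}

// Step is one entry of the merged sequence: a frequency per axis and a duration.
type Step struct {
	Fx, Fy, Dur float64
}

// freqAt returns the frequency sounding on track at time t, or 0 if it is silent.
func freqAt(track []Note, t float64) float64 {
	for _, n := range track {
		if t >= n.Start && t < n.End {
			return n.Freq
		}
	}
	return 0
}

// merge cuts the timeline at every note boundary of either track and
// emits one Step per interval, so silences become zero frequencies.
func merge(x, y []Note) []Step {
	times := []float64{0} // start at 0 so leading silence is kept
	for _, track := range [][]Note{x, y} {
		for _, n := range track {
			times = append(times, n.Start, n.End)
		}
	}
	sort.Float64s(times)

	var steps []Step
	for i := 0; i+1 < len(times); i++ {
		t0, t1 := times[i], times[i+1]
		if t1 <= t0 {
			continue // duplicate boundary, zero-length interval
		}
		steps = append(steps, Step{freqAt(x, t0), freqAt(y, t0), t1 - t0})
	}
	return steps
}

func main() {
	x := []Note{{0, 1, 440}, {1, 2, 494}}
	y := []Note{{0.5, 2, 330}}
	for _, s := range merge(x, y) {
		fmt.Printf("%+v\n", s)
	}
}
```

The linear scan in `freqAt` keeps the sketch short; a longer piece would benefit from walking both tracks with two indices instead.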

Turning frequencies into movement

The formula above works well for a single axis. However, a G-Code move command accepts a single feed rate: the speed at which the machine travels along the path to the given X Y coordinates. Each motor therefore spins at a different rate depending on the direction of the move.

Given two frequencies \(f_x\) and \(f_y\) and a duration \(t\), the displacements \(s_x\), \(s_y\) and the feed rate \(v\) can be obtained with the following equations:

\[v_x = f_x / k\]

\[v_y = f_y / k\]

\[s_x = v_x t\]

\[s_y = v_y t\]

\[v = \sqrt{v_x^2 + v_y^2}\]

Simple. Here are a few observations:

- When one of the channels is silent, movement will be either vertical or horizontal.

- If the two channels play the same note, the machine moves at a 45° angle.

- Every frequency ratio \(f_y / f_x\) corresponds to a direction between 0° and 90°.

- The signs of \(v_x\) and \(v_y\) don’t matter, since the pitch depends only on how fast each motor spins, not which way.

Now we can convert these values into G-Code, specifically a G1 (linear move) command:

G1 X<s_x> Y<s_y> F<v>
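One way to emit that line, sketched under two assumptions not stated above: the program uses relative positioning (G91), so X and Y are offsets from the current position; and when both tracks are silent it emits a G4 dwell instead, since a zero-length G1 would take no time and the rest would be lost (Grbl reads G4 P as seconds). `gcodeLine` is a hypothetical helper.

```go
package main

import "fmt"

// gcodeLine formats one step as G-Code. sx and sy are displacements in mm,
// v is the feed rate in mm/min and seconds is the step duration.
// Assumes relative positioning (G91).
func gcodeLine(sx, sy, v, seconds float64) string {
	if sx == 0 && sy == 0 {
		// Both tracks silent: wait with a dwell instead of moving.
		return fmt.Sprintf("G4 P%.3f", seconds)
	}
	return fmt.Sprintf("G1 X%.3f Y%.3f F%.1f", sx, sy, v)
}

func main() {
	fmt.Println("G91") // switch to relative positioning
	// Roughly two A4 notes held for 0.5 s with k = 1.337.
	fmt.Println(gcodeLine(2.743, 2.743, 465.4, 0.5))
	fmt.Println(gcodeLine(0, 0, 0, 0.25)) // a 0.25 s rest
}
```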

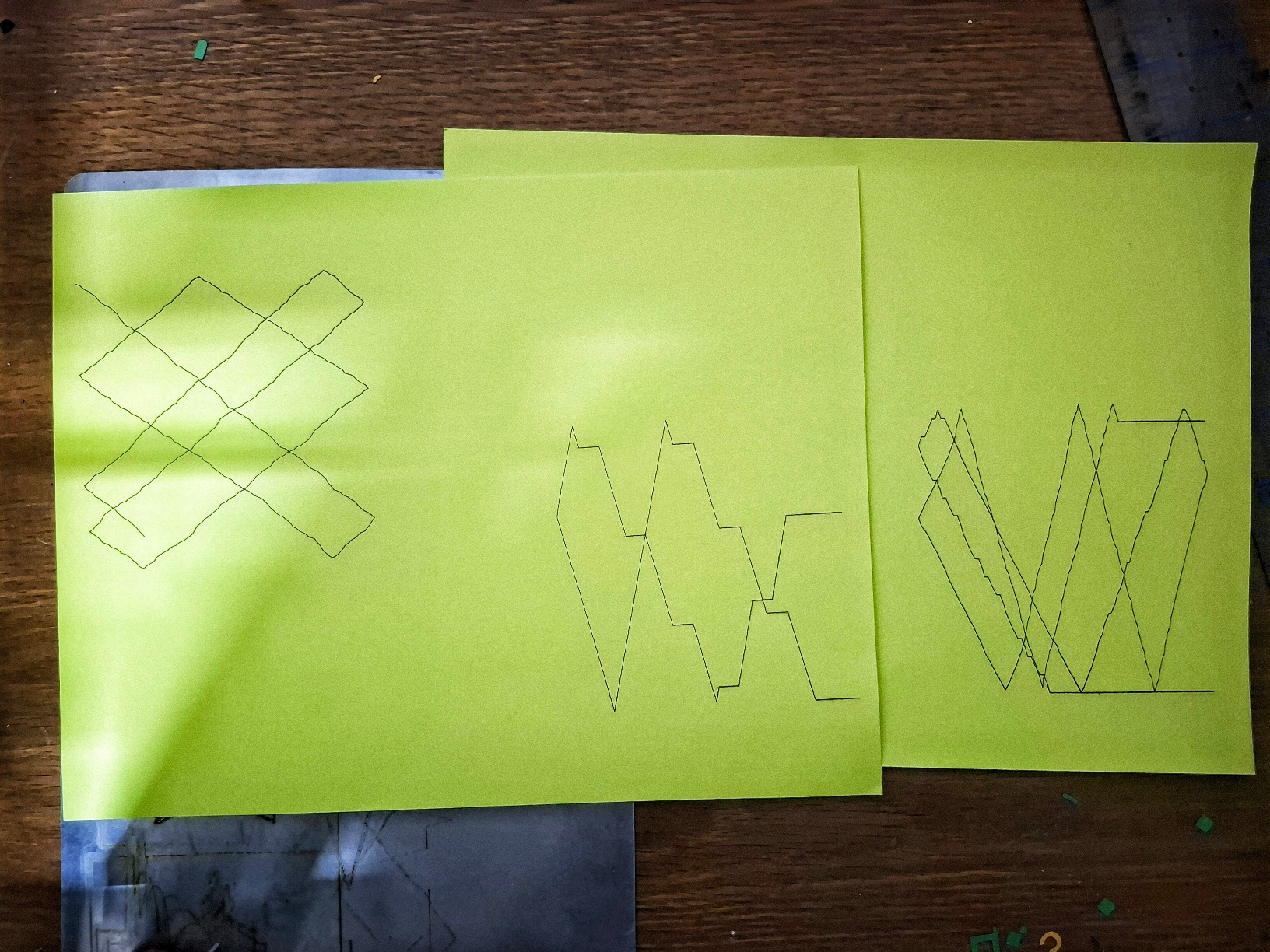

Because the machine would eventually go out of bounds if it kept moving along the positive x and y axes, the program flips the direction of movement once it exceeds a certain distance (100 mm in this case). This also creates a crude visualization of the music, which I traced using the laser.

Let’s test it

It’s rare for code that interacts with external hardware to work the first time. This time it did!

Source code can be found on GitHub.